AMD Athlon XP 2600+: Part 2

By Van Smith

Date: August 22, 2002

At this moment we will pause from the long march through our benchmark results to revisit the significant issues regarding BAPCo's SysMark 2002 brought up by AMD during our recent meeting with representatives from that chipmaker.

Click here to read the first part of our Athlon XP 2600+ review.

We must state up front that despite the condemning information divulged to us, the AMD spokesmen repeatedly expressed support and guarded optimism for the reformation of BAPCo.

===================================

Background

For several years we have been on a campaign to educate reviewers and readers about the link between Intel and BAPCo, the parent organization of the SysMark family of commercial benchmarks. Inside the computer industry, the connection between the chipmaker and the benchmark developer is commonly acknowledged.

In fact, the head of a competing benchmark firm bluntly labeled BAPCo as a "front" for Intel. Randall Kenney, the head of CSA Research, made this statement during his presentation at Platform Conference in early 2001.

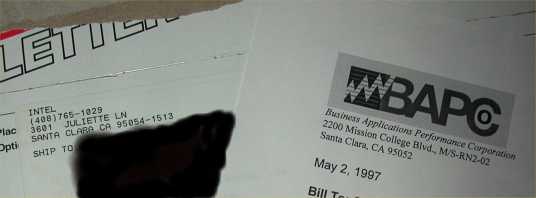

BAPCo's links to Intel were at one time more visible. This BAPCo product was sent from Intel's internal post office.

===================================

Confirmation

As we reported in Part 1 of this review, AMD confirmed to us that it has joined BAPCo as a full member. The chipmaker undertook this surprising move with the hope that it might be able to help "correct" a benchmark that it currently characterizes as "broken."

We discovered that Intel chairs the BAPCo desktop performance committee, the body responsible for SysMark. This is not much of a surprise.

Much more importantly, AMD reluctantly admitted that due to BAPCo's nature as primarily a meeting facilitator, Intel itself has been providing software engineers for the development of the SysMark products. When pressed further, the AMD representative admitted that it is likely that all SysMark development so far has been conducted internally at Intel by Intel.

===================================

Method

We have lambasted the SysMark products for their unrealistic workloads, but their scoring methods are equally atrocious. Instead of scoring each task on its own and weighting the results according to usage model criteria, SysMark makes an individual task's importance depend on how long it takes to complete!

That's right, a task's contribution to the final SysMark 2002 score is derived entirely from how long it takes to execute!

For example, a 3D rendering test that might take one second to complete would only contribute one tenth as much to the final SysMark score as a ten-second task that consists of loading an application. Moreover, tasks are often repeated many times in SysMark, skewing a particular workload even more.

Why implement such a bizarre scoring scheme? Because it naturally biases the entire benchmark towards large working sets...

...which demand memory throughput

...which is one of the Pentium 4's few strengths.

And it also allows particular tasks to be weighted more heavily through repetition.

This is not merely a bad scoring strategy; it is intrinsically biased.

AMD's example illustrating SysMark's scoring method.
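
To make the time weighting concrete, the short Python sketch below works through the one-second and ten-second tasks from our example. It is our own illustration, not AMD's slide and not BAPCo's actual scoring code. The function names, the task labels, and the assumption that a task's weight is simply its share of total elapsed time are all ours.

```python
def time_weights(task_seconds):
    """Return each task's share of the total elapsed time."""
    total = sum(task_seconds.values())
    return {name: secs / total for name, secs in task_seconds.items()}


def repeat(task_seconds, name, times):
    """Return a copy of the workload with one task run `times` times."""
    repeated = dict(task_seconds)
    repeated[name] = task_seconds[name] * times
    return repeated


# Hypothetical workload built from the two durations used in the text.
tasks = {"3d_render": 1.0, "app_load": 10.0}

weights = time_weights(tasks)
ratio = weights["app_load"] / weights["3d_render"]
print(f"app_load counts {ratio:.0f}x as much as 3d_render")

# Running the slow task three times triples its elapsed time and its weight.
repeated_weights = time_weights(repeat(tasks, "app_load", 3))
repeated_ratio = repeated_weights["app_load"] / repeated_weights["3d_render"]
print(f"after three repeats: {repeated_ratio:.0f}x")
```

Under this assumed model the application load carries ten times the weight of the render, and repeating it three times pushes the ratio to thirty to one.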

===================================

Evidence

SysMark 2001 and 2002 obfuscate their specific tasks by spitting out one "dumb" number as a score for an entire suite of tests. This makes it impossible to know the relative performance of CPUs on individual tasks.

Even so, it is possible from mere observations to notice some of the strange workloads deployed in the BAPCo tests. For instance, we have cited the Excel test, which is composed almost entirely of repeated sorts over large datasets, as an example of an obviously unrealistic workload.

However, AMD has been able to "pick the lock" on SysMark to gain a much keener understanding of the internal workings of these tests.

The Athlon XP was introduced after SysMark 2001 was released, but before SysMark 2002 became available. AMD knew that the Athlon XP performed much worse compared to the P4 on SysMark 2002 than on SysMark 2001 (especially after the Windows Media Encoder patch is applied so that the program properly recognizes SSE in the Athlon XP).

Trying to understand why this scoring discrepancy existed, the chipmaker discovered that when comparing SysMark 2001 to SysMark 2002 the following patterns were evident:

Tasks were removed that favored AMD.

Tasks were added that favor Intel.

Workloads that favored Intel were repeated -- sometimes many times -- to inflate their weight under the scoring scheme described above.

An example of this is the Adobe Photoshop component of SysMark. In the 2001 version of the BAPCo/Intel product, 13 different filters were used. On eight of these 13 filters the Athlon XP 2000+ beat the 2GHz Northwood P4.

However, in SysMark 2002 every single one of these eight filters was removed -- again, tasks where the Athlon XP beat the Pentium 4 -- and replaced with repeated filters that the Pentium 4 executed faster than the Athlon XP.

The Athlon XP won all of the Photoshop filters marked in green. None of these are in SysMark 2002.

Another indefensible example of biasing SysMark 2002 involved the "Flash" tests. Originally, 211 of the 241 Flash tasks were "Step Frame" in SysMark 2001. The Athlon XP, which was introduced after SysMark 2001 was released, excels at "Step Frame" operations.

Remarkably, in SysMark 2002 there are now zero "Step Frame" Flash tasks.

That's right, 88% of the original Flash test tasks -- again, operations that the Athlon XP performed well -- were completely yanked out of SysMark 2002. Additionally, the Flash test file exploded in size from 118kB to 2.662MB, thus stressing bandwidth and biasing this task by consuming much more time.

The Flash component of SysMark was eviscerated only to be stuffed with P4-friendly fat for 2002.
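
For readers who want to check the Flash figures, the arithmetic is easy to reproduce. The Python below is a minimal sketch using only the counts and file sizes quoted above. The variable names are ours, and we assume decimal units (1 MB = 1000 kB) for the size comparison.

```python
# Task counts quoted in the text for the SysMark 2001 Flash test.
step_frame_2001 = 211
flash_tasks_2001 = 241

step_frame_share = step_frame_2001 / flash_tasks_2001
print(f"Step Frame share in 2001: {step_frame_share:.1%}")

# File sizes quoted in the text. Assumption: 1 MB = 1000 kB.
size_2001_kb = 118.0
size_2002_kb = 2.662 * 1000

growth = size_2002_kb / size_2001_kb
print(f"Flash test file growth: {growth:.1f}x")
```

The share works out to just under 88%, and the test file grew by a factor of roughly 22.6 under the decimal-unit assumption.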

In Access, the Athlon XP beat the Pentium 4 almost everywhere in SysMark 2001 (on our front page yesterday, we published results where a modest 1GHz VIA C3 trounced a 1.7GHz P4-Celeron in another database test -- "NetBurst" is "NetBust" when it comes to databases). For the 2002 version of the BAPCo/Intel benchmark suite, the Access test was almost completely removed!

The Pentium 4 couldn't win in Microsoft Access so the test was almost eliminated for 2002.

AMD even touched upon the Excel component of SysMark, which we have criticized in the past for its grossly distorted workload. The number two CPU producer quantified Excel's workload by discovering that sorting, a bandwidth-intensive operation that is an atypical task in Excel, accounted for over 90% of the Excel application time.

In other words, over 90% of the Excel score in SysMark 2002 is determined by sort speed when sorting is not even common when using Excel!

Sorting accounts for a whopping 90% of the Excel score in the latest SysMark test!
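
The effect of such a lopsided mix can be sketched with a little arithmetic. The Python below is our own illustration under stated assumptions. Only the 90% sort share comes from AMD's figure (AMD says "over 90%", so we use 90% as a floor). The per-task speed ratios of 1.2 and 0.8 and the function name are hypothetical, chosen only to show the mechanism.

```python
def overall_speedup(time_fractions, speed_ratio):
    """Combine per-task speed ratios into one overall ratio.

    time_fractions: share of baseline run time spent in each task (sums to 1).
    speed_ratio: how many times faster the other CPU is on each task.
    """
    relative_time = sum(
        time_fractions[task] / speed_ratio[task] for task in time_fractions
    )
    return 1.0 / relative_time


# 90% sorting follows AMD's figure; the remaining 10% is lumped together.
excel_mix = {"sort": 0.90, "everything_else": 0.10}

# Hypothetical CPU: 20% faster on sorts, 20% slower on all other work.
hypothetical_cpu = {"sort": 1.2, "everything_else": 0.8}

result = overall_speedup(excel_mix, hypothetical_cpu)
print(f"overall ratio: {result:.3f}")
```

With these made-up numbers, a chip that loses on everything except sorting still finishes about 14% ahead overall.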

===================================

Lawsuit

As we have reported recently, a group of Pentium 4 owners has lodged a class action lawsuit against Intel. This group of disgruntled Intel customers charges the chipmaker with misrepresenting the true performance of the Pentium 4 in comparison with the Pentium III and Athlon processors.

AMD's discoveries covered here offer potent ammunition for conviction since the data bluntly shows that Intel's actions to mislead customers were calculated and deliberate.

To gain credibility for its covertly developed marketing tools, Intel created BAPCo as a front organization to distribute SysMark as an independently derived benchmark suite. However, Intel itself produced the tests, while safely shielding itself from suspicion (other than ours) behind BAPCo.

We write many of our own tools. As we have reported often before, it is actually very difficult to construct a set of balanced benchmarks that favor the Pentium 4 over the Athlon XP.

AMD's evidence shows clearly that, after setting up BAPCo, Intel then went far, far out of its way to skew SysMark -- perhaps the most widely used benchmark suite today -- to favor the Pentium 4 over competing CPU architectures.

===================================

Download

We are making the AMD presentation available for scrutiny here.

===================================

More to Come

We will continue our Athlon XP 2600+ review next week.

===================================
